5 Things We Learned Walking the GTC 2026 Show Floor

Hundreds of booths. Thousands of demos. Here are the five trends that actually matter.

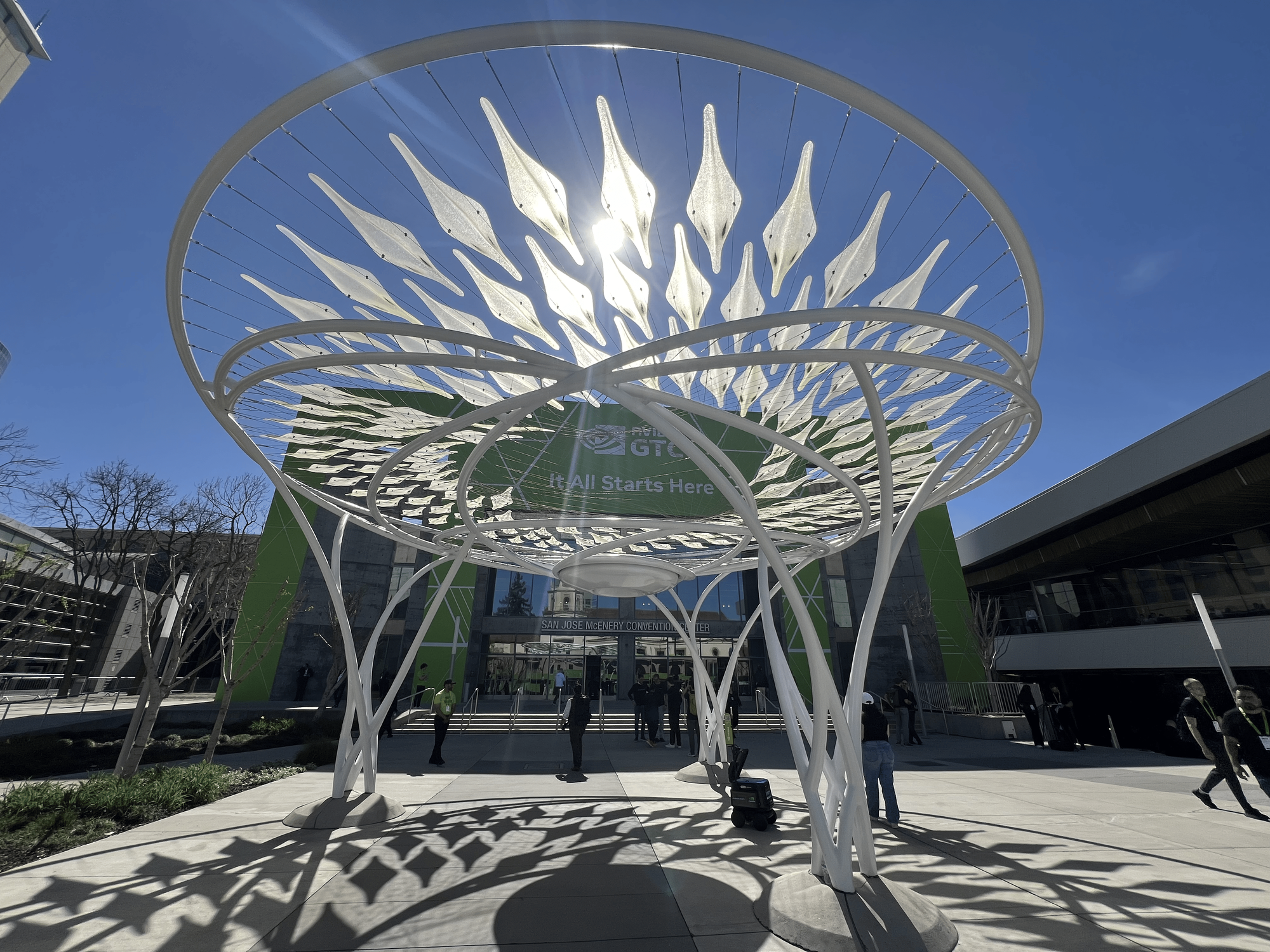

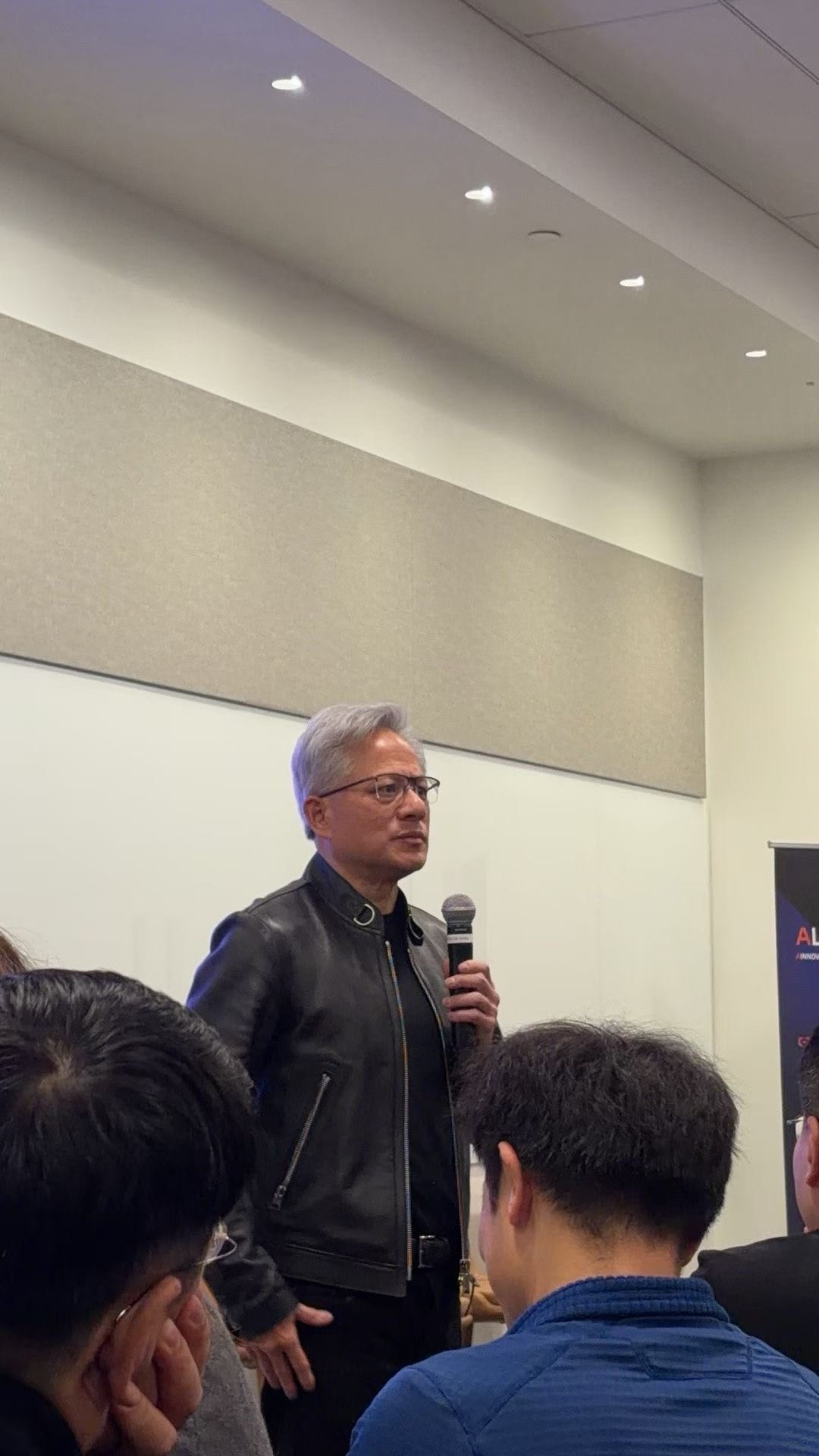

NVIDIA GTC is where the AI industry shows its hand. Not the polished keynote version — the real version, the one you piece together from booth taglines, founder pitches, and the sheer density of startups attacking the same problems. We spent days on the floor this year. Here's what stood out.

1. Inference Dethroned Training as the Main Event

GTC has always been a training story — bigger models, more GPUs, longer runs. Not this year. The center of gravity shifted decisively to inference: how you serve models, not how you build them.

One startup demonstrated persistent KV caching that cuts redundant computation by up to 10x. Another open-sourced a GPU-native data format that feeds models 20x faster than Parquet and donated it to the Linux Foundation. Multiple booths showcased portable inference hardware, including a carry-on-sized device packing four H200 GPUs for air-gapped environments.
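For readers who have not worked with KV caching, here is a toy sketch of the idea behind it. It is not the startup's implementation: real systems store per-layer key/value tensors on the GPU, while this hypothetical `PrefixCache` class only counts which token prefixes it has already seen. It is meant to show why a shared prompt prefix does not have to be recomputed on every request.

```python
class PrefixCache:
    """Toy stand-in for a persistent KV cache (illustrative only).

    It remembers which token prefixes were already processed, so a later
    request that starts with the same tokens can skip that work.
    """

    def __init__(self):
        self._seen = set()   # each entry is a tuple of tokens forming a prefix
        self.computed = 0    # tokens that needed fresh computation
        self.reused = 0      # tokens served from the cache

    def process(self, tokens):
        prefix = ()
        for tok in tokens:
            prefix = prefix + (tok,)
            if prefix in self._seen:
                self.reused += 1
            else:
                self._seen.add(prefix)
                self.computed += 1


# Hypothetical workload: two requests sharing an 8-token system prompt.
system_prompt = [f"s{i}" for i in range(8)]
cache = PrefixCache()
cache.process(system_prompt + ["user", "a"])
cache.process(system_prompt + ["user", "b"])
print(cache.computed, cache.reused)  # prints: 11 9
```

The second request recomputes only its final token. The longer the shared prefix (system prompts, agent memory, tool definitions), the larger the share of work a cache like this can skip.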

The economics tell the story. Inference spending is projected to surpass training spend for the first time in 2026. Training happens once; inference happens every time a user sends a message, asks a question, or triggers an AI agent workflow. At scale, inference cost is the product's unit economics.
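The arithmetic behind that claim can be sketched in a few lines. Every number below (training cost, price per thousand requests, daily request volume) is a made-up placeholder, not a figure from the show floor. The sketch only shows that a one-time cost is eventually overtaken by a per-request cost that never stops accruing.

```python
import math


def days_until_inference_exceeds_training(training_cost, cost_per_1k_requests,
                                          requests_per_day):
    """Return the first whole day on which cumulative inference spend
    is strictly greater than the one-time training cost."""
    daily_cost = cost_per_1k_requests * requests_per_day / 1000
    if daily_cost <= 0:
        raise ValueError("daily inference cost must be positive")
    return math.floor(training_cost / daily_cost) + 1


# Hypothetical product: $1M to train, $2 per 1,000 requests, 5M requests a day.
days = days_until_inference_exceeds_training(
    training_cost=1_000_000,
    cost_per_1k_requests=2,
    requests_per_day=5_000_000,
)
print(days)  # prints: 101
```

Under these placeholder numbers, inference spend passes the training bill in a little over three months, and it keeps growing with every new user after that.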

This is the problem we've been focused on at MegaNova. AI agents — especially those running sustained, multi-step tasks with memory and context — are inference-heavy workloads. Generic clouds treat every API call the same. Purpose-built inference that understands agent workloads is what makes them viable at scale, not just in demos.

2. The Agent Explosion Is Real — But the Infrastructure Isn't Ready

"Agentic AI" dominated the floor. Agent platforms, agent orchestration, agent evaluation, agent deployment — easily a dozen well-funded companies in this space alone. One booth's tagline captured the vibe perfectly: "Build Boring Agents."

The pitch was consistent across booths: enterprises want AI that completes multi-step tasks autonomously — filing reports, processing claims, routing tickets, researching accounts, drafting responses. Reliability is the feature. Several platforms have raised hundreds of millions specifically for agent orchestration and workforce management.

But a pattern emerged that was hard to ignore. Most of these platforms focus on the orchestration layer — how agents are wired together, how workflows are defined, how outputs are evaluated. Far fewer are solving the infrastructure layer underneath: the inference engine that actually powers the agent's thinking, the model serving that handles sustained context across long-running tasks, the cost structure that makes agent-per-employee economics work.

Building an agent is getting easier. Running thousands of them in production, reliably and affordably, is the harder problem — and the one with fewer solutions on the floor.

3. Sovereign AI Went From Buzzword to Buying Criterion

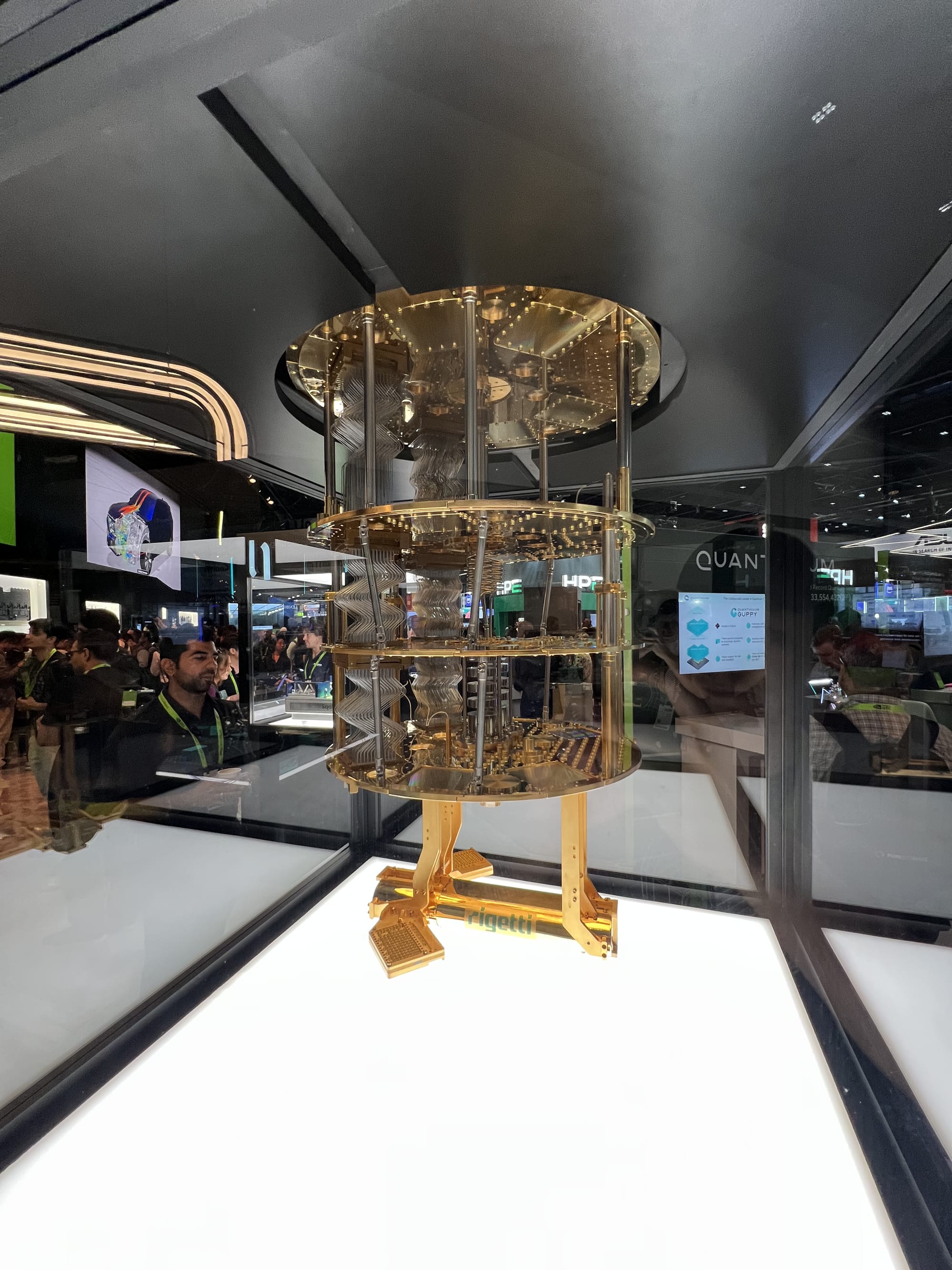

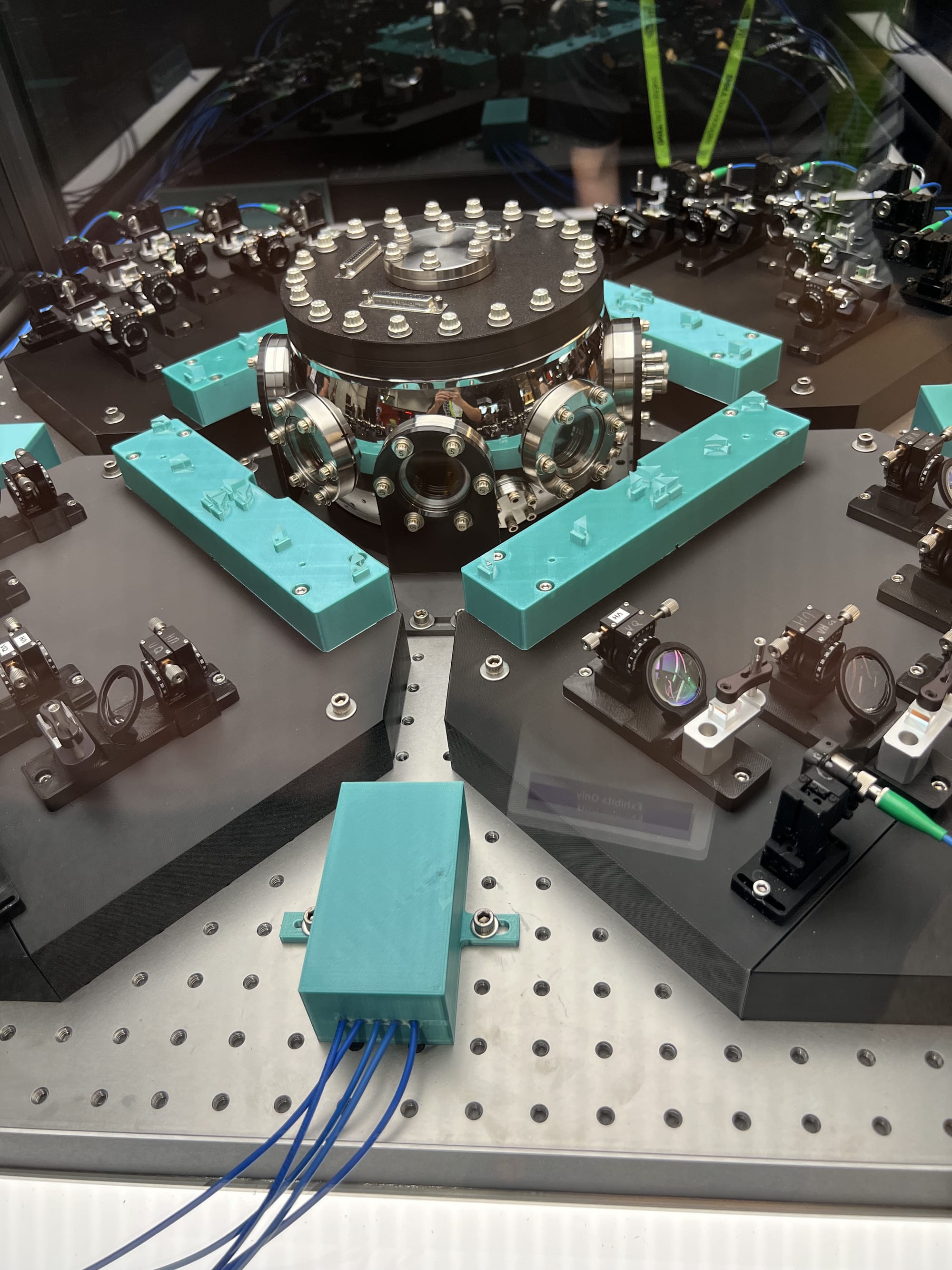

We counted at least fifteen booths explicitly pitching sovereign AI — the ability to run models within jurisdictional boundaries without data leaving controlled environments.

The range was striking. One company operates 500+ edge cloud locations and offers a "Neutral AI Factory" model where MSPs can provide GPU-as-a-Service without building their own infrastructure. A Southeast Asian telco subsidiary is building a 200-megawatt AI grid spanning four countries. The carry-on supercomputer company? Their pitch was "sovereign inference at the edge" for defense and healthcare.

This isn't theoretical. GDPR covers 450 million people. Over 40 countries now have data sovereignty mandates. Sector-specific regulations — HIPAA, financial services compliance, government security requirements — make cloud-only deployment untenable for a growing share of the market.

For AI agents handling enterprise data — customer records, financial transactions, internal communications — sovereignty isn't optional. It's a prerequisite. The platforms that offer flexible deployment without sacrificing performance will win the regulated industries that represent the largest AI budgets.

4. AI Evaluation Became Its Own Category

Some of the most interesting booths weren't building AI. They were testing it.

One platform evaluates AI agents across multi-step workflows with automated scoring, version comparison, and hallucination detection. Another, founded by an ex-Intel AI executive who previously sold a Bayesian optimization company, uses statistical anomaly detection on production AI logs. A third has an open-source tracing framework with over 2 million monthly downloads, backed by investors including the venture arms of major observability companies.

The fact that "AI evaluation" is now a funded category tells you something: the industry has moved past "does it work in a demo?" to "does it work in production, reliably, at scale, over time?"

For AI agents, this is especially critical. A chatbot that hallucinates is annoying. An autonomous agent that hallucinates while processing a financial transaction or drafting a customer response is a liability. The evaluation gap — how do you know your agent is actually doing what it should, across thousands of executions? — is one of the biggest unsolved problems in production AI.

5. Everyone Is Building Platforms. Nobody Is Building the On-Ramp.

Here's the meta-trend nobody said out loud.

Walk the GTC floor and you see a clear pattern: billion-dollar platforms, SOC 2 badges, enterprise sales motions, Fortune 500 logos. The AI agent infrastructure being built is overwhelmingly designed for organizations with dedicated engineering teams, months-long implementation cycles, and deep technical expertise.

Meanwhile, the businesses that would benefit most from AI agents — mid-market companies, growing teams, operators who know their workflows intimately but don't have ML engineers on staff — are left choosing between consumer chatbots with no real capability and enterprise platforms they can't deploy without a systems integrator.

The gap isn't in the models or the compute. It's in the creation layer. The ability to go from "I know what this agent should do" to "it's running in production" without a six-month implementation project.

This is the problem MegaNova's AI Employee Creator Studio is designed to solve. Not another orchestration framework for engineers — a creation platform where anyone who understands a workflow can build, customize, and deploy an AI agent that handles it. Backed by an inference cloud built for sustained agent workloads, not repurposed from batch processing.

The models are available. The inference is getting cheaper. What's missing is the bridge between "AI agent" as a concept and "AI employee" as a reality for the businesses that need it most.

The Takeaway

GTC 2026 showed an industry maturing fast. The "generic AI for everything" era is ending. Replacing it are purpose-built platforms optimized for specific workloads: sovereign inference for regulated industries, agent orchestration for enterprise automation, evaluation tools for production reliability.

The biggest opportunity? Making all of that accessible — not just to the companies that can afford a platform team, but to every business ready to put AI agents to work.

MegaNova is an AI Agent Cloud — purpose-built inference and an AI Employee Creator Studio for businesses ready to deploy AI agents that work. Learn more at meganova.ai
